Cencurity

Real-time security for AI-generated code inside VS Code.

The problem

AI coding tools generate code instantly.

But security checks happen too late — during review or after execution.

This creates a blind spot where insecure code can slip through unnoticed.

What Cencurity does

Cencurity sits between your IDE and the model.

It inspects generated code in real time and blocks unsafe patterns before they reach your system.

What it does

- Opens the Cencurity Security Center inside VS Code.

- Routes supported LLM traffic through a local protection proxy.

- Keeps your existing provider API key where it already lives.

- Verifies routing automatically when protection is enabled.

- Shows live audit activity for real protected requests.

Quickstart

- Install the extension.

- Open the Command Palette (Ctrl+Shift+P, or ⌘+Shift+P on macOS) and run Cencurity: Enable Protection.

- Select your LLM provider.

- Open the Command Palette (Ctrl+Shift+P, or ⌘+Shift+P on macOS) and run Cencurity: Open Security Center.

That's it — protection is now active.

Features

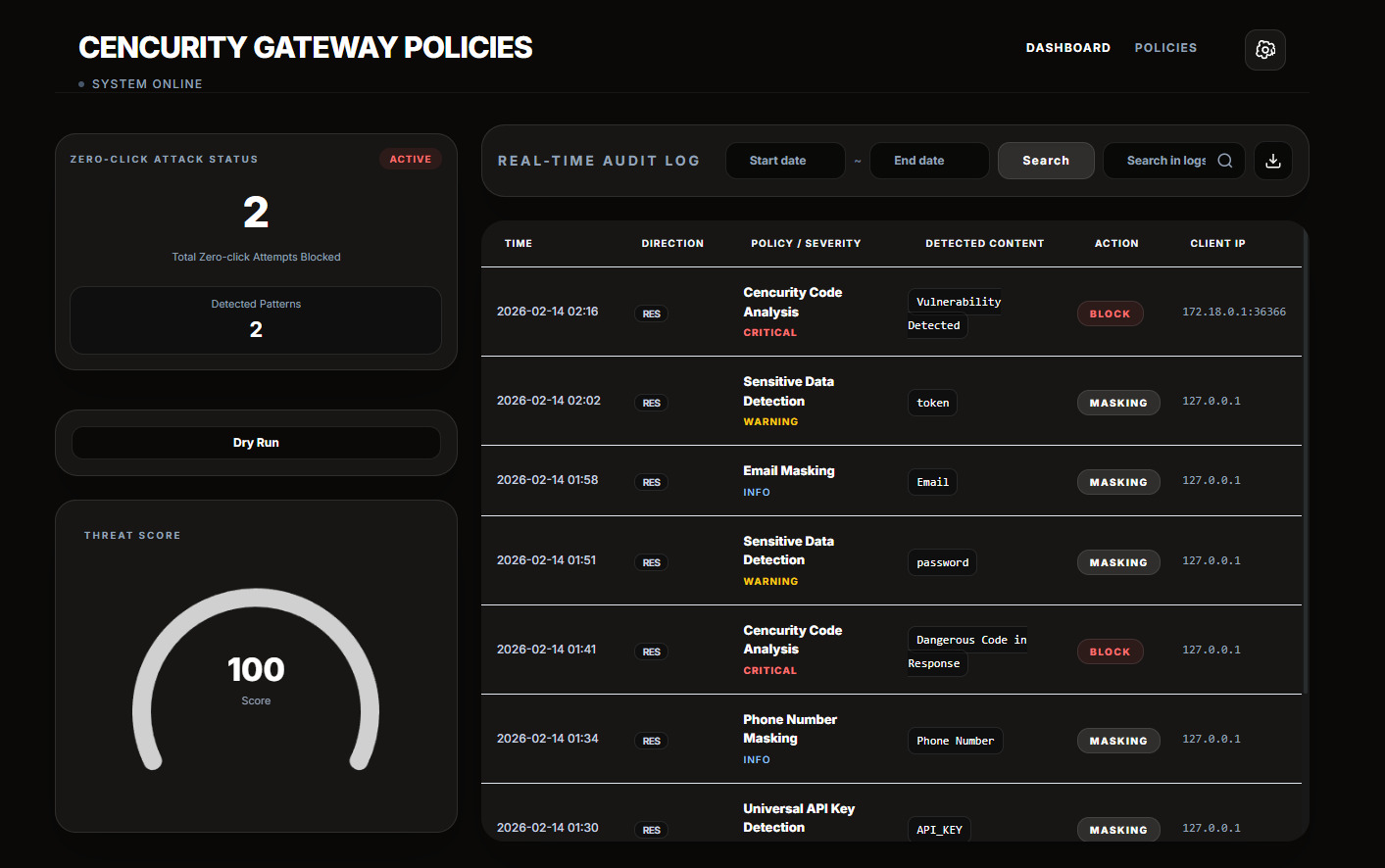

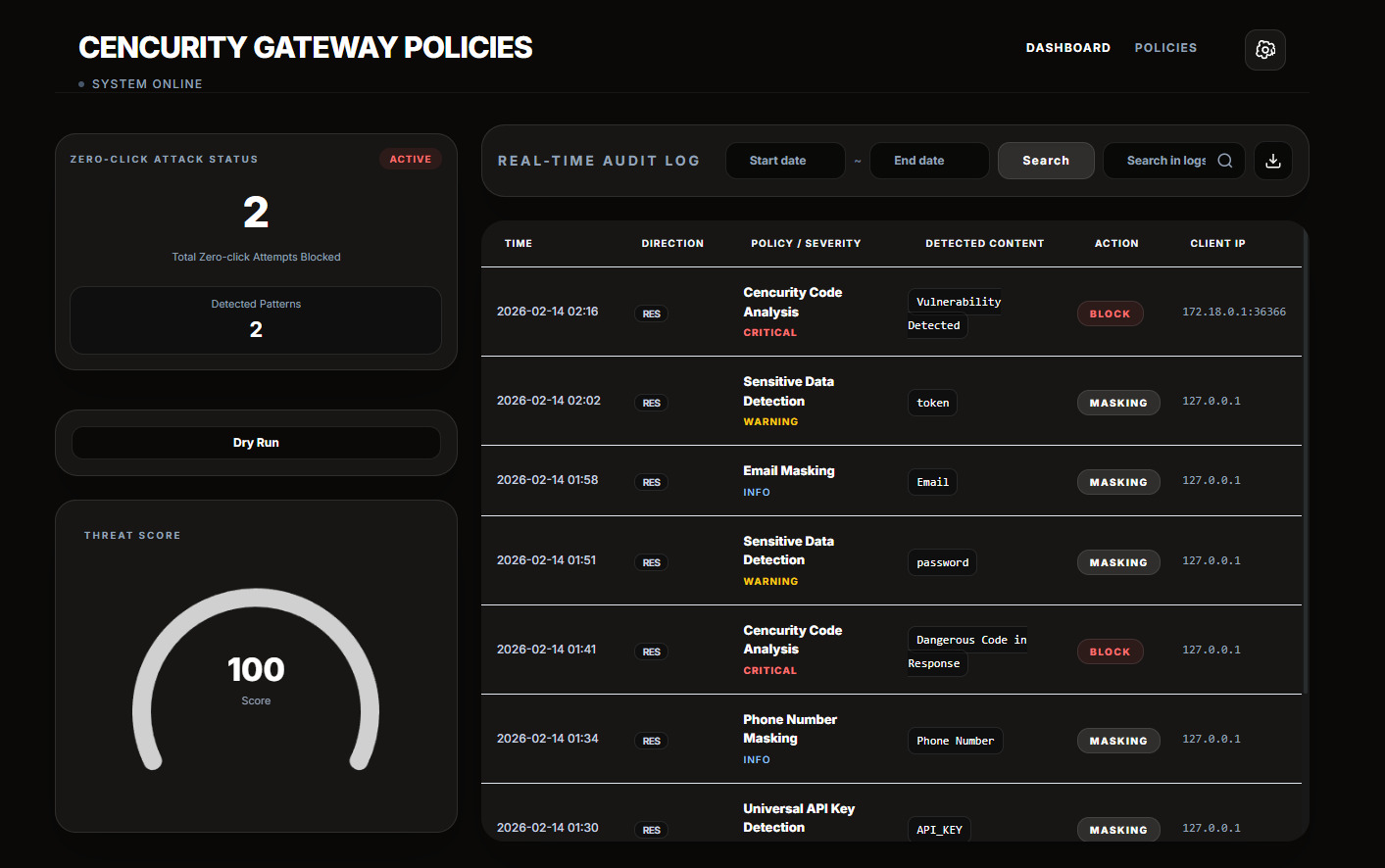

Real-time Log Analysis

- Inspect generated code as it flows through the system

- See exactly what was detected and why

Dry Run Mode

- Simulate execution without risk

- Understand behavior before anything runs

Zero-click Attack Detection

- Detect dangerous patterns instantly

- Block risky operations such as subprocess calls and shell execution
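As an illustration of what detecting a dangerous pattern can look like, here is a minimal sketch in Python that walks the syntax tree of a generated snippet and reports calls on a deny-list. The deny-list, the function names, and the choice of AST matching are assumptions made for this example; they do not describe Cencurity's actual rule set or engine.

```python
import ast

# Hypothetical deny-list for illustration only.
RISKY_CALLS = {
    "os.system", "os.popen",
    "subprocess.run", "subprocess.call",
    "subprocess.Popen", "subprocess.check_output",
    "eval", "exec",
}

def dotted_name(node):
    """Return 'a.b.c' for a Name/Attribute chain, or None for anything else."""
    parts = []
    while isinstance(node, ast.Attribute):
        parts.append(node.attr)
        node = node.value
    if isinstance(node, ast.Name):
        parts.append(node.id)
        return ".".join(reversed(parts))
    return None

def find_risky_calls(source):
    """Return a sorted list of (line, call_name) for deny-listed calls."""
    try:
        tree = ast.parse(source)
    except SyntaxError:
        return []  # incomplete or non-Python code: nothing to report here
    hits = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Call):
            name = dotted_name(node.func)
            if name in RISKY_CALLS:
                hits.append((node.lineno, name))
    return sorted(hits)

generated = "import subprocess\nsubprocess.run(['ls'])\nprint('ok')\n"
print(find_risky_calls(generated))
```

This sketch only matches fully qualified call names, so an aliased import such as `from subprocess import run` would not be caught. A real detector would need to resolve imports and cover more than one language.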

Command Palette

Search for cencurity in the VS Code Command Palette to access the main actions:

- Cencurity: Open Security Center: open the Security Center dashboard inside VS Code

- Cencurity: Enable Protection: turn protection on and select your LLM provider

- Cencurity: Disable Protection: turn protection off and restore previous supported routing settings

- Cencurity: Test Protection: verify that requests are reaching the local proxy

- Cencurity: Show Runtime Info: inspect the local runtime and protection state

- Cencurity: Install or Update Core: install or refresh the local core runtime

Supported providers

- OpenAI

- Anthropic

- Gemini

- OpenRouter

- Other OpenAI-compatible LLMs

How it works

IDE → Cencurity Proxy → LLM Provider

- Your API key stays in your IDE.

- Requests are routed through a local proxy.

- Code is analyzed in real time before execution.
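The routing idea can be sketched as follows: only the provider's base URL is pointed at the local proxy, the API key is left untouched, and the previous value is remembered so that disabling protection can restore it. The proxy address, the function names, and the mapping of providers to environment variables are assumptions for illustration, not Cencurity's documented configuration.

```python
# Hypothetical local proxy address; the real port is not documented here.
PROXY_URL = "http://127.0.0.1:8787"

# Assumed base-URL variables read by the providers' official SDKs.
BASE_URL_VARS = {
    "openai": "OPENAI_BASE_URL",
    "anthropic": "ANTHROPIC_BASE_URL",
}

def enable_routing(env, provider):
    """Return (routed_env, previous) without modifying the input mapping."""
    var = BASE_URL_VARS[provider]
    previous = {var: env.get(var)}  # None means the variable was unset
    routed = dict(env)
    routed[var] = PROXY_URL         # the API key entry is not touched
    return routed, previous

def disable_routing(env, previous):
    """Restore the values captured by enable_routing."""
    restored = dict(env)
    for var, old in previous.items():
        if old is None:
            restored.pop(var, None)
        else:
            restored[var] = old
    return restored

env = {"OPENAI_API_KEY": "sk-test"}
routed, previous = enable_routing(env, "openai")
print(routed)
print(disable_routing(routed, previous) == env)
```

Keeping the earlier value alongside the override is what makes a clean restore possible when protection is turned off.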

What is CAST?

CAST (Code-Aware Security Transformation) protects a moment that existing tools don't cover.

| Model | When it runs | Main job | Typical result |
| --- | --- | --- | --- |
| CAST | while the model is still writing code | stop unsafe output before it reaches the developer | allow, redact, block |
| SAST | after code already exists | scan code for vulnerabilities | findings after generation |
| DAST | against a running app | test runtime behavior | runtime issues after deployment or staging |
| IAST | inside an instrumented app | watch real execution paths | internal runtime findings |

The point is not that CAST replaces SAST.

The point is that CAST protects a different moment: while code is being generated.

Cencurity is the first tool built on CAST.
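To make the "while the model is still writing code" idea concrete, here is a minimal sketch of a streaming inspector that buffers model output into complete lines and returns one of the three results named above: allow, redact, or block. The patterns, the `[REDACTED]` marker, and the function names are hypothetical and chosen for this example; the real CAST engine is not described in this document.

```python
import re

# Hypothetical patterns for illustration only.
BLOCK = re.compile(r"\bos\.system\s*\(|\bsubprocess\.\w+\s*\(")
SECRET = re.compile(r"sk-[A-Za-z0-9]{16,}")

def classify(line):
    """Return (verdict, text_to_forward) for one complete line."""
    if BLOCK.search(line):
        return "block", ""
    if SECRET.search(line):
        return "redact", SECRET.sub("[REDACTED]", line)
    return "allow", line

def inspect_stream(chunks):
    """Yield (verdict, line) per complete line; stop at the first block."""
    buffer = ""
    for chunk in chunks:
        buffer += chunk
        # Only complete lines are classified, so a pattern split across
        # two chunks is still seen whole.
        while "\n" in buffer:
            line, buffer = buffer.split("\n", 1)
            verdict, text = classify(line)
            yield verdict, text
            if verdict == "block":
                return
    if buffer:
        yield classify(buffer)

stream = ["import o", "s\nkey = 'sk-", "ABCDEFGHIJKLMNOPQRST'\nos.sys",
          "tem('rm -rf /')\nprint('never')\n"]
for verdict, text in inspect_stream(stream):
    print(verdict, repr(text))
```

Line buffering is a simplification: it delays each line until its newline arrives and would miss patterns that span several lines.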

Notes

- Routing is applied through supported environment-variable-based paths.

- Some extensions may bypass VS Code environment settings, in which case their requests do not route through the proxy.

- The connector can use a local workspace runtime during development or a released core artifact in distribution builds.