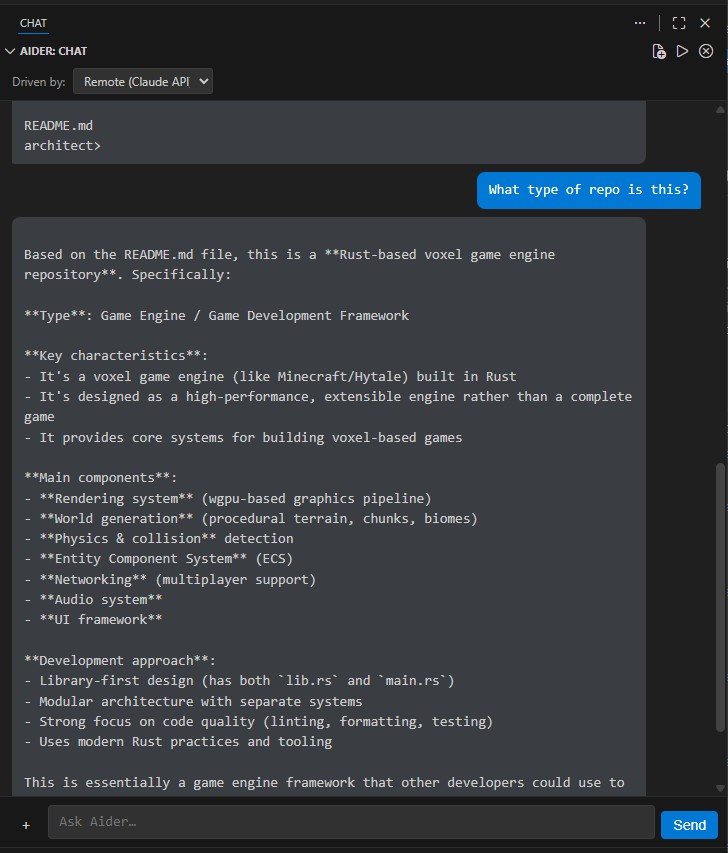

Aider Chat

Chat with Aider directly inside VS Code / Cursor.

Why Aider Chat?

Running Aider in a terminal works, but it means constant window switching, no file context integration, and no way to toggle providers on the fly. Aider Chat fixes all of that:

Installation

From the Marketplace

Search "Aider Chat" in the VS Code Extensions panel, or:

From Source

Prerequisites

Getting Started

That's it: you're pair-programming with AI.

Switching Providers

Use the "Driven by" dropdown at the top of the chat panel to switch between:

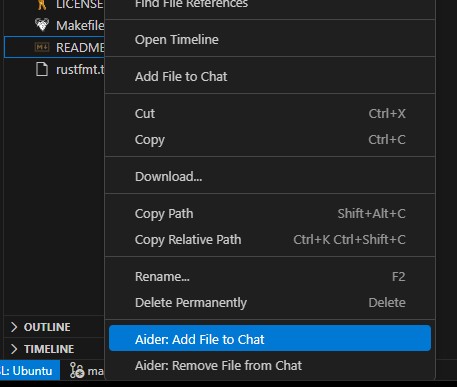

When you switch to Remote, Ollama shuts down automatically to free your RAM/VRAM. When you switch back to Local, it starts right back up.

Adding Files to Context

Give Aider the files it needs to work with. Three ways to do it:

- Right-click in the Explorer or Editor and select "Aider: Add File to Chat".
- Click the + button next to the chat input to open a multi-file picker.
- Use the command palette.

To remove a file from context, right-click it and choose "Aider: Remove File from Chat".

Commands

Configuration

API keys (

| Setting | Default | Description |
|---|---|---|
| aiderAgent.provider | "local" | Active backend: "local" or "remote" |
| aiderAgent.local.model | "ollama_chat/qwen2.5-coder:14b" | Ollama model for Aider |
| aiderAgent.local.apiBase | "http://localhost:11434" | Ollama API URL |
| aiderAgent.remote.model | "claude-sonnet-4-20250514" | Claude model identifier |
| aiderAgent.remote.apiKey | "" | Anthropic API key (if not using .env) |
| aiderAgent.extraArgs | [] | Extra CLI flags passed to Aider |
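To illustrate how these settings could translate into an Aider invocation, here is a minimal TypeScript sketch. It is not the extension's actual code: `AiderSettings`, `AiderLaunch`, `defaultSettings`, and `buildAiderLaunch` are hypothetical names. The sketch assumes the model is passed with Aider's `--model` flag, that the Ollama URL is supplied through the `OLLAMA_API_BASE` environment variable, and that the Anthropic key is supplied through `ANTHROPIC_API_KEY`.

```typescript
// Hypothetical shape mirroring the settings table (not the extension's real types).
interface AiderSettings {
  provider: "local" | "remote";
  local: { model: string; apiBase: string };
  remote: { model: string; apiKey: string };
  extraArgs: string[];
}

// What a launcher would need: CLI arguments plus extra environment variables.
interface AiderLaunch {
  args: string[];
  env: Record<string, string>;
}

// Defaults copied from the settings table.
const defaultSettings: AiderSettings = {
  provider: "local",
  local: {
    model: "ollama_chat/qwen2.5-coder:14b",
    apiBase: "http://localhost:11434",
  },
  remote: { model: "claude-sonnet-4-20250514", apiKey: "" },
  extraArgs: [],
};

// Assumed mapping from settings to an Aider command line and environment.
function buildAiderLaunch(s: AiderSettings): AiderLaunch {
  const env: Record<string, string> = {};
  let model: string;

  if (s.provider === "local") {
    model = s.local.model;
    // Aider reads the Ollama endpoint from OLLAMA_API_BASE.
    env["OLLAMA_API_BASE"] = s.local.apiBase;
  } else {
    model = s.remote.model;
    // Only export the key when the setting is non-empty, so a key that
    // comes from .env is left untouched (assumed behavior).
    if (s.remote.apiKey !== "") {
      env["ANTHROPIC_API_KEY"] = s.remote.apiKey;
    }
  }

  // extraArgs are appended verbatim after the model selection.
  return { args: ["--model", model, ...s.extraArgs], env };
}
```

Keeping the mapping in a pure function like this makes a provider switch a matter of rebuilding the launch description and restarting the Aider process with it.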

Model Sizing Guide

Not sure which local model to pick? Here's a quick guide:

| Free RAM | Recommended model | aiderAgent.local.model value |
|---|---|---|
| ~20 GB free | Qwen 2.5 Coder 14B (~9 GB) | ollama_chat/qwen2.5-coder:14b |
| ~52 GB free | Qwen 2.5 Coder 32B (~20 GB) | ollama_chat/qwen2.5-coder:32b |
| Any | DeepSeek Coder V2 16B (~9 GB) | ollama_chat/deepseek-coder-v2:16b |
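The sizing table can also be read as a simple lookup. The sketch below is one possible encoding in TypeScript; `recommendLocalModel` is a hypothetical helper, and treating the approximate figures in the table as hard minimums is an assumption made for illustration.

```typescript
// Hypothetical helper: picks a model string from the sizing table.
// Assumption: the "~20 GB" and "~52 GB" rows are treated as minimum free RAM.
function recommendLocalModel(freeRamGb: number): string {
  if (freeRamGb >= 52) {
    return "ollama_chat/qwen2.5-coder:32b";
  }
  if (freeRamGb >= 20) {
    return "ollama_chat/qwen2.5-coder:14b";
  }
  // The table lists DeepSeek Coder V2 16B for "Any" amount of RAM.
  return "ollama_chat/deepseek-coder-v2:16b";
}
```

For example, `recommendLocalModel(24)` returns the 14B model string, which matches the default of `aiderAgent.local.model`.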

Example: Claude Opus 4

    # .env
    ANTHROPIC_API_KEY=sk-ant-api03-your-key-here

    // .vscode/settings.json
    {
      "aiderAgent.provider": "remote",
      "aiderAgent.remote.model": "claude-opus-4-20250514"
    }
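Since the key can live either in `.env` or in `aiderAgent.remote.apiKey`, it may help to see how a lookup across both sources could work. The following TypeScript sketch is illustrative only: `parseDotEnv` and `resolveAnthropicKey` are hypothetical helpers, and giving `.env` precedence over the setting is an assumption based on the "if not using .env" note in the settings table.

```typescript
// Minimal KEY=VALUE parser for .env text; skips blank lines and # comments.
// It does not handle quoting or multi-line values.
function parseDotEnv(text: string): Record<string, string> {
  const out: Record<string, string> = {};
  for (const raw of text.split(/\r?\n/)) {
    const line = raw.trim();
    if (line === "" || line.charAt(0) === "#") continue;
    const eq = line.indexOf("=");
    if (eq <= 0) continue; // no key before "=", or no "=" at all
    out[line.slice(0, eq).trim()] = line.slice(eq + 1).trim();
  }
  return out;
}

// Assumed precedence: a key in .env wins, then the settings value.
// Returns undefined when neither source provides a key.
function resolveAnthropicKey(
  dotEnvText: string,
  settingsKey: string
): string | undefined {
  const fromEnv = parseDotEnv(dotEnvText)["ANTHROPIC_API_KEY"];
  if (fromEnv) return fromEnv;
  return settingsKey !== "" ? settingsKey : undefined;
}
```

Returning `undefined` when neither source has a key lets the caller decide whether to prompt the user or fall back to the local provider.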

Contributing

See DEVELOPMENT.md for architecture diagrams, build instructions, testing setup, and CI/CD details.

License

MIT